
Rather than treating a “char” as a single Unicode character, Java's designers chose to keep the 16-bit encoding.

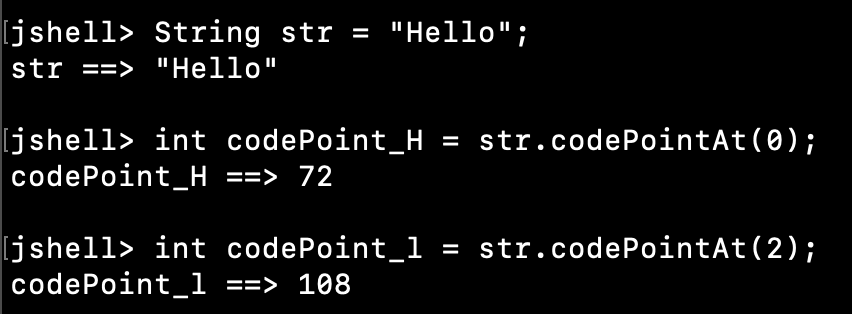
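To make the 16-bit limit concrete, here is a minimal sketch of how a character outside the 16-bit range behaves in a Java String. The class name `CharVsCodePoint` and the helper `codePointLength` are made up for illustration; the `String` and `Character` methods are standard library calls.

```java
public class CharVsCodePoint {

    /** Number of Unicode code points in s (hypothetical helper name). */
    static int codePointLength(String s) {
        return s.codePointCount(0, s.length());
    }

    public static void main(String[] args) {
        // U+1F600 lies outside the 16-bit range, so Java stores it
        // as two chars (a surrogate pair): \uD83D followed by \uDE00.
        String s = "a\uD83D\uDE00b";

        System.out.println(s.length());          // counts 16-bit chars
        System.out.println(codePointLength(s));  // counts Unicode code points

        // charAt returns one 16-bit code unit, here only half of the pair.
        char half = s.charAt(1);
        System.out.println(Character.isHighSurrogate(half));

        // codePointAt reads the whole code point that starts at a char index.
        System.out.println(Integer.toHexString(s.codePointAt(1)));
    }
}
```

The two counts differ for this string, which is the practical consequence of a “char” being a 16-bit code unit rather than a character.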

The post title may be blunt. But I think that after reading this article, you will never use the type “char” in Java ever again.

The origin of type “char”

At the beginning, everything was ASCII, and every character on a computer could be encoded with 7 bits. While this is fine for most English texts, and can also suit most European languages if you strip the accents, it definitely has its limitations. So the extended character table came, bringing a whole new range of characters to ASCII, including the infamous character 255, which looks like a space but is not a space. Code pages then defined how to display each character between 128 and 255, so that different scripts and languages could be printed.

Then Unicode brought this to a brand new level by encoding characters on… 16 bits. This was about the time Java came out, in the mid-1990s. Thus, Java's designers decided to build Strings out of characters encoded on 16 bits. Every Java char has always been, and still is, encoded with 16 bits.

However, when integrating large numbers of characters, especially ideograms, the Unicode team understood that 16 bits were not enough. So they added more bits and notified everyone: “starting now, we can encode a character with more than 16 bits”. In order not to break compatibility with older programs, Java chars remained encoded with 16 bits.

“The Julia design decision apparently was to use indexing to retrieve the elements of representation, not the actual elements a string has: characters.”

Julia string indices retrieve characters, not code units; it's just that the indices are not consecutive. With `s = "αβγ"`, indexing yields `'α'` (Unicode U+03B1), `'β'` (U+03B2), and `'γ'` (U+03B3), each in category Ll (Letter, lowercase). (Note that the return value of `s[i]` is a character represented by `Char`, a 4-byte object corresponding to a Unicode codepoint.) String iteration is also over characters, as in `for c in s`. In contrast, the code units (bytes for UTF-8) are retrieved by the `codeunit` function, as in `codeunit(s, 3)`. Substrings work similarly: you give them code-unit indices, but they still give you a whole string of Unicode characters (as a copy).

So, the point is, once you convert your character offsets to Julia String indices, you are still working with characters.

(This is a key reason why a variable-width encoding like UTF-8 can be so popular.)

For the problem I want to solve, it is actually the other way round: I have a Unicode string (in some encoding originally, but ultimately it will be a “normal” Julia string) and a large list of offset ranges, where the offsets are 0-based Unicode code point indices. To access the string subsequences that correspond to those ranges, I first need to convert them into the proper index ranges for Julia strings, and I think the suggestion of creating a map using `collect(eachindex(str))` is probably the solution.

Indexing by code point should be achievable by simply creating an index map from code point indices to the code units that begin each code point (and another map for the other direction). I guess I would be prepared to implement this myself, though it looks like something so basic that it feels like it should already exist. (On the other hand, it does not exist in Java either, where strings are represented using UTF-16 code units with surrogate characters.)

Where are you getting the ranges of Unicode characters from? In most kinds of string-processing operations (e.g. search/replace), you find ranges of characters by first iterating over the string, in which case you also get the indices (regardless of the indexing scheme). It’s pretty rare to have an application in which someone says “give me the 527th character of this string” where the number 527 just falls down out of the sky.
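The index map from code point indices to code units discussed above could look roughly like the following in Java, where the same mismatch exists between code points and UTF-16 code units. This is only a sketch under assumptions: the class `CodePointIndex` and its methods are hypothetical names, and offset ranges are assumed to be 0-based and end-exclusive. Only standard `String`, `Character`, and `Arrays` calls are used.

```java
import java.util.Arrays;

/** Hypothetical helper: maps code point positions to char (UTF-16 code unit) positions. */
public final class CodePointIndex {
    private final String text;
    // starts[k] is the char index where the k-th code point begins.
    // The final entry equals text.length(), so a range may end at the end of the string.
    private final int[] starts;

    public CodePointIndex(String text) {
        this.text = text;
        int n = text.codePointCount(0, text.length());
        starts = new int[n + 1];
        int unit = 0;
        for (int cp = 0; cp < n; cp++) {
            starts[cp] = unit;
            // Advance by 1 char for BMP characters, 2 for a surrogate pair.
            unit += Character.charCount(text.codePointAt(unit));
        }
        starts[n] = text.length();
    }

    /** Code point index to char index (both 0-based). */
    public int toCharIndex(int codePointIndex) {
        return starts[codePointIndex];
    }

    /** Char index to code point index, or -1 if it falls inside a surrogate pair. */
    public int toCodePointIndex(int charIndex) {
        int pos = Arrays.binarySearch(starts, charIndex);
        return pos >= 0 ? pos : -1;
    }

    /** Substring for a 0-based, end-exclusive range of code point offsets (assumed convention). */
    public String slice(int fromCodePoint, int toCodePoint) {
        return text.substring(starts[fromCodePoint], starts[toCodePoint]);
    }

    public static void main(String[] args) {
        CodePointIndex idx = new CodePointIndex("a\uD83D\uDE00bc");
        System.out.println(idx.toCharIndex(2)); // char index where 'b' starts
        System.out.println(idx.slice(1, 3));    // the U+1F600 character followed by 'b'
    }
}
```

Building the map costs one pass over the string, after which each offset converts with an array lookup. For a handful of conversions, the built-in `String.offsetByCodePoints` may be enough without a precomputed map.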

Interesting. Do you happen to know whether it is possible not just to convert back and forth between the UTF32 representation from this library and the default Julia strings, but, more importantly, also to convert the offsets?

Actually, even more importantly: does this library literally convert the string representation? I would not actually want that. All I need is a “view” of a Julia string (no matter what the representation) that allows me to index Unicode codepoints.

My issue with Julia strings is not the representation, which I do not really care about, but the way one indexes and retrieves the elements of the string: the Julia design decision apparently was to use indexing to retrieve the elements of the representation, not the actual elements a string has: characters. But it should be possible to at least ADD a way to do this by providing a “view”. So `codepoint(juliastring, 3)` or similar should give me the actual third codepoint, which could be the 5th code unit in Julia.
